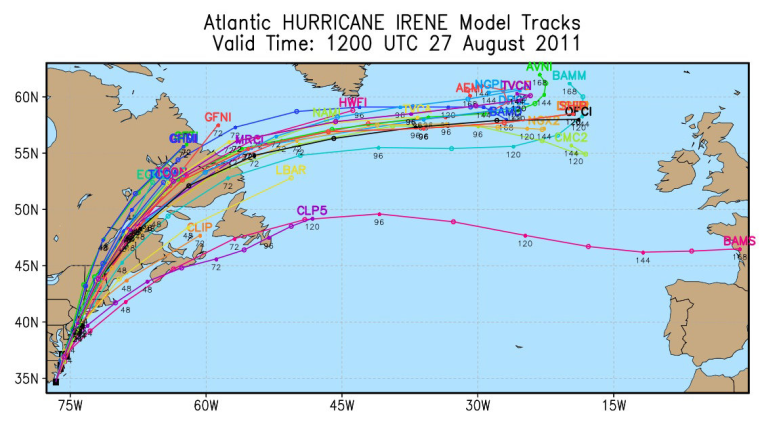

Something I've been wondering about hurricane tracking maps: if the various weather services are working together with essentially the same information, and everyone wants to predict the path of the storm as accurately as possible, how is it that the models don't agree? What's the difference between them that lets them take the same variables and come up with different results? Why even have competing models?

In other words, why so spaghetti?

As you might have guessed, part of the answer is that my question is itself not really correct. The most complete (and mostly over my head, technically) answer I found was in the National Hurricane Center's Technical Summary of the National Hurricane Center Track and Intensity Models (pdf).

First, they don't all use the same variables, mostly because not all variables are created equal. Some data takes longer to gather and process, and while that's happening it's possible to do some predicting with the data that already exists. That means the various models aren't competing with each other; they're complementing each other.

That said, here's my layman's view of hurricane models as described in the NHC paper.

The models are designed to evaluate the track of the storm, the intensity of the storm, or some combination of the two. They are further categorized as being either early or late. Early models are based on simpler calculations, so they are produced more quickly. Late models come later because their more complex calculations take much longer to process. When you see a guy like meteorologist Bill Karins on MSNBC excitedly announcing new models, that's in part because he's been waiting for them to process and knows that they may be more comprehensive. Some of these models come out only two or four times a day.

By the way, the words they use to describe the accuracy of these models are "skillful" and "unskillful." Often there is a trade-off between skillfulness and speed.

The two main categories of models are again based on the differences in the data they employ. Dynamical, or numerical, models make use of the kinds of physical data that come from radars and weather stations. Statistical models instead draw upon history and experience with past hurricanes and other weather patterns to guess at how the current storm will behave. And then some models represent a mix, perhaps with some data weighted more heavily than others, like a weather mutual fund.
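To make the "weather mutual fund" idea a little more concrete, here is a minimal Python sketch of a weighted blend of several forecast tracks. Everything in it is an assumption for illustration: the model names (`MODEL_A` and so on), the positions, and the weights are made up, and real blended models are certainly more sophisticated than a plain weighted average. It also assumes every track reports positions at the same forecast hours and that longitudes don't cross the 180° line.

```python
def consensus_track(tracks, weights):
    """Blend forecast tracks into one by taking a weighted average
    of the (latitude, longitude) positions at each forecast hour."""
    total_weight = sum(weights[name] for name in tracks)
    n_points = len(next(iter(tracks.values())))
    blended = []
    for i in range(n_points):
        lat = sum(weights[m] * tracks[m][i][0] for m in tracks) / total_weight
        lon = sum(weights[m] * tracks[m][i][1] for m in tracks) / total_weight
        blended.append((round(lat, 2), round(lon, 2)))
    return blended


# Hypothetical tracks: each is a list of (lat, lon) at matching forecast hours.
tracks = {
    "MODEL_A": [(25.0, -75.0), (27.0, -77.0)],
    "MODEL_B": [(25.0, -75.0), (28.0, -76.0)],
    "MODEL_C": [(25.0, -75.0), (26.0, -78.0)],
}
# Hypothetical weights: MODEL_A is trusted twice as much as the others.
weights = {"MODEL_A": 2.0, "MODEL_B": 1.0, "MODEL_C": 1.0}

blended = consensus_track(tracks, weights)
print(blended)
```

The models all start from the same current position, so the blend only differs from the individual tracks once they start to fan out, which is exactly where the spaghetti appears on the map.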

Some models have specialties, like assessing the impact of interaction with land, or being especially skillful in the short-term versus the long-term forecast.

There's also something called a trajectory model, which sounds to me like a "go with the flow" model, extending the path drawn by more data-intensive models.
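As a toy illustration of that "go with the flow" reading, the Python sketch below pushes a storm position along with a fixed steering wind, one hour at a time. This is only a layman's sketch under loud assumptions: the steering wind is a single constant made-up value (a real trajectory model would presumably take a changing flow from another model), the function name `follow_the_flow` is hypothetical, and a degree of latitude is approximated as 111 km.

```python
import math

KM_PER_DEGREE = 111.0  # rough length of one degree of latitude (assumption)


def follow_the_flow(lat, lon, east_kmh, north_kmh, hours):
    """Move a point along a constant steering wind in one-hour steps.

    east_kmh and north_kmh are the eastward and northward speeds of the
    flow in km/h. Returns the list of (lat, lon) positions, start included.
    """
    path = [(lat, lon)]
    for _ in range(hours):
        # Degrees of longitude shrink toward the poles, hence the cosine.
        km_per_degree_lon = KM_PER_DEGREE * math.cos(math.radians(lat))
        lat += north_kmh / KM_PER_DEGREE
        lon += east_kmh / km_per_degree_lon
        path.append((lat, lon))
    return path


# Hypothetical storm at 25N, 75W drifting north-northwest for 6 hours.
path = follow_the_flow(25.0, -75.0, east_kmh=-10.0, north_kmh=20.0, hours=6)
for lat, lon in path:
    print(f"{lat:.2f}, {lon:.2f}")
```

Because nothing here models the storm itself, the result is only as good as the flow fed into it, which fits the idea that this kind of model leans on the more data-intensive ones.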

The models themselves aren't named "statistical" and "dynamical." Instead they have names like the Geophysical Fluid Dynamics Laboratory model, marked on a spaghetti graph as GFDL. The one the National Hurricane Center emphasizes is their own official forecast, marked with OFCL. I'm guessing that's why it's more distinct as the only black one in the list on the right.

Naturally, if you have any expertise in this material, I'd greatly appreciate it if you shared your insights.